Three SDKs from OpenAI, Cursor, and Amazon dropped in the same week. And I cannot stop thinking about the implications for the future of AI products and delivery.

OpenAI and Amazon are partnering at the service delivery level to make their models sticky for enterprise clients.

With these moves, they all shipped their version of the same bet: the harness is the product now.

What’s a harness, you might ask?

I cover that today.

If you build AI products and you missed this week, you might have missed the architectural shift that defines 2026.

Here’s the signal from the noise.

OpenAI shipped a production-grade agent harness with sandbox execution built in

The Agents SDK got native sandbox execution, durable state via snapshotting and rehydration (if a container dies, the agent picks up from its last checkpoint), and orchestration primitives for multi-agent handoffs. The Python SDK already has 25K GitHub stars, and the TypeScript version just dropped.
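This is a minimal, plain-Python sketch of what "snapshotting and rehydration" means in practice. It is not the Agents SDK's API. `CheckpointedRun`, the JSON snapshot file, and the step functions are all hypothetical stand-ins. After every completed step, the run writes its state to disk. A fresh process, standing in for a restarted container, then resumes from the last checkpoint instead of starting over.

```python
import json
import os
import tempfile
from typing import Callable

# Hypothetical step type: takes the current state dict, returns an updated one.
Step = Callable[[dict], dict]


class CheckpointedRun:
    """Runs steps in order, snapshotting state to disk after each one."""

    def __init__(self, steps: list[Step], snapshot_path: str):
        self.steps = steps
        self.snapshot_path = snapshot_path

    def _rehydrate(self) -> tuple[int, dict]:
        # Resume from the last snapshot if one exists; otherwise start fresh.
        if os.path.exists(self.snapshot_path):
            with open(self.snapshot_path) as f:
                snap = json.load(f)
            return snap["next_step"], snap["state"]
        return 0, {}

    def _snapshot(self, next_step: int, state: dict) -> None:
        # Write to a temp file, then rename, so a crash never leaves a half-written snapshot.
        tmp = self.snapshot_path + ".tmp"
        with open(tmp, "w") as f:
            json.dump({"next_step": next_step, "state": state}, f)
        os.replace(tmp, self.snapshot_path)

    def run(self) -> dict:
        start, state = self._rehydrate()
        for i in range(start, len(self.steps)):
            state = self.steps[i](state)
            self._snapshot(i + 1, state)
        return state


calls: list[str] = []
crashed = {"done": False}


def plan(state: dict) -> dict:
    calls.append("plan")
    return {**state, "plan": "fix bug"}


def edit(state: dict) -> dict:
    calls.append("edit")
    if not crashed["done"]:
        crashed["done"] = True
        raise RuntimeError("container died")  # simulate losing the container mid-run
    return {**state, "edited": True}


def run_tests(state: dict) -> dict:
    calls.append("run_tests")
    return {**state, "tests_pass": True}


path = os.path.join(tempfile.mkdtemp(), "agent_snapshot.json")
try:
    CheckpointedRun([plan, edit, run_tests], path).run()
except RuntimeError:
    pass
# A brand-new run object plays the role of the restarted container.
final = CheckpointedRun([plan, edit, run_tests], path).run()
print(final)  # {'plan': 'fix bug', 'edited': True, 'tests_pass': True}
print(calls)  # ['plan', 'edit', 'edit', 'run_tests']
```

The `plan` step runs only once. The second run starts at the step that failed, because the snapshot already recorded that `plan` had finished.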

Robert’s Take: The sleeper feature is durable execution. Long-running agents that survive container restarts without losing state. That solves the #1 production reliability issue we’ve been fighting at Clarity. Every AI builder I talk to has hit this wall. OpenAI just paved a path through it.

Amazon Bedrock now runs the OpenAI agent harness natively

Launched less than 24 hours after OpenAI’s Microsoft exclusivity ended. Enterprise teams on AWS can now run the full OpenAI agent harness, including Codex and GPT-5.5, inside their existing compliance and billing infrastructure. IAM, PrivateLink, guardrails, encryption, CloudTrail logging. All of it.

Robert’s Take: This is the signal that the harness play is about distribution, not just technology. OpenAI built the best agent harness and now they’re planting it inside the infrastructure where 80% of enterprise workloads already live. If you’re an AI builder doing enterprise work, AWS partnership certification just became a real acquisition channel.

Cursor released their entire agent runtime as a TypeScript SDK

npm install @cursor/sdk. That’s it. You now have programmatic access to the same harness that powers Cursor’s desktop app. Codebase indexing, MCP servers, skills, hooks, subagents. Run locally or in Cursor’s cloud VMs. Rippling, Notion, Faire, and C3 AI are already shipping with it.

Robert’s Take: This is harness-as-a-service. Cursor took their internal agent runtime, the one that beats Opus on SWE-bench using an open-source model, and made it a product you can embed. Notion is using it to go from bug in their issue tracker to merged PR without an engineer touching code. That’s the self-healing codebase everyone talks about, actually working in production.

Arize’s Chief Product Officer wrote the clearest definition of “harness” vs “framework” I’ve read

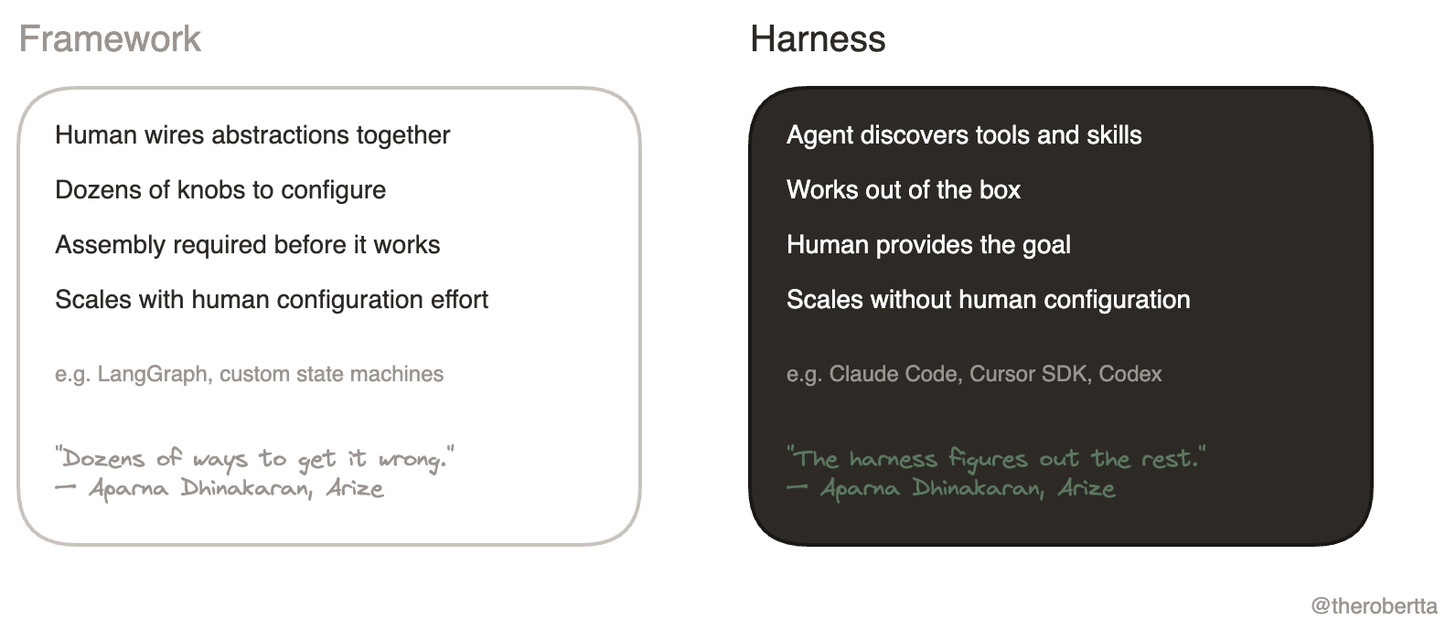

Aparna Dhinakaran drew a hard line: Frameworks ask humans to wire abstractions together. Harnesses work out of the box. The model reads instructions, discovers tools, composes skills, spawns subagents. The human provides the goal. The harness figures out the rest.

Robert’s Take: This distinction matters for your architecture decisions right now. If you’re configuring a state graph by hand, you’re building a framework. If your agent reads a CLAUDE.md file and starts working, you’re using a harness. The harness wins because it scales without proportional human configuration effort. Pick accordingly.
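Here is a rough sketch of "reads instructions, discovers tools, composes skills." It assumes a convention where instructions live in an `AGENTS.md`-style file, shown inline as a string so the example runs on its own. It also assumes each skill has a one-line summary plus a longer body. `Skill` and `build_context` are hypothetical names, not from any of the SDKs above. The idea shown is progressive disclosure: the model first sees only skill summaries, and a skill's full body enters context only once it is requested.

```python
from dataclasses import dataclass


@dataclass
class Skill:
    name: str
    summary: str  # short line that is always shown to the model
    body: str     # full instructions, loaded only on demand


def build_context(instructions: str, skills: list[Skill], loaded: set[str]) -> str:
    """Assemble the prompt: instructions, a skill index, and only the loaded skill bodies."""
    parts = [instructions.strip(), "Available skills:"]
    for s in skills:
        parts.append(f"- {s.name}: {s.summary}")
    for s in skills:
        if s.name in loaded:
            parts.append(f"## Skill: {s.name}\n{s.body}")
    return "\n".join(parts)


# Hypothetical contents of an AGENTS.md-style instruction file.
AGENTS_MD = """
# Repo rules
Run the test suite before proposing a change.
"""

skills = [
    Skill("triage", "Reproduce and label a bug report", "Step 1: reproduce the bug.\nStep 2: label it."),
    Skill("release", "Cut a release branch", "Step 1: bump version.\nStep 2: tag the commit."),
]

# First turn: only the summaries are in context.
ctx1 = build_context(AGENTS_MD, skills, loaded=set())
# Later the model asks for "triage", so the harness discloses that one body only.
ctx2 = build_context(AGENTS_MD, skills, loaded={"triage"})
print("Step 1: reproduce" in ctx1, "Step 1: reproduce" in ctx2, "bump version" in ctx2)
# False True False
```

The human never wires a graph here. The harness exposes what exists, and the model's own requests decide what gets loaded.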

Anthropic published a postmortem showing how harness bugs silently destroyed Claude Code quality for 6 weeks

Three compounding harness-level bugs: reasoning effort accidentally downgraded (March 4), a session-clearing bug that wiped context every turn (March 26), and a verbosity-reduction prompt that hurt coding quality (April 16). All fixed by April 20. The model was fine. The harness decisions broke the user experience.

Robert’s Take: This is the most important postmortem in AI engineering this year. Same model, same capabilities. Three harness configuration changes tanked the product. It validates everything the harness engineering conversation is about. The model is not your bottleneck. Your harness decisions are. Treat them with the same rigor you’d treat a production deployment.
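One way to treat harness settings "with the same rigor you'd treat a production deployment" is to version them as data and gate every change on an eval run. This is a sketch under that assumption. The setting names are hypothetical, loosely modeled on the three failure modes in the postmortem. They are not Anthropic's actual configuration, and `fake_evals` stands in for a real eval suite.

```python
from typing import Callable

# Hypothetical harness settings, loosely modeled on the postmortem's three failure modes.
BASELINE = {
    "reasoning_effort": "high",
    "clear_context_each_turn": False,
    "system_prompt": "You are a careful coding agent.",
}


def diff_config(old: dict, new: dict) -> dict:
    """Return {key: (old_value, new_value)} for every setting that changed."""
    keys = old.keys() | new.keys()
    return {k: (old.get(k), new.get(k)) for k in sorted(keys) if old.get(k) != new.get(k)}


def gate_rollout(old: dict, new: dict, run_evals: Callable[[dict], float],
                 min_score: float) -> tuple[bool, dict]:
    """Block a harness change unless the eval suite still clears the quality bar."""
    changes = diff_config(old, new)
    if not changes:
        return True, changes
    return run_evals(new) >= min_score, changes


def fake_evals(config: dict) -> float:
    # Stand-in scoring: a real suite would replay coding tasks against the new config.
    score = 0.9
    if config["reasoning_effort"] != "high":
        score -= 0.2
    if config["clear_context_each_turn"]:
        score -= 0.3
    return score


candidate = {**BASELINE, "reasoning_effort": "medium"}
ok, changes = gate_rollout(BASELINE, candidate, fake_evals, min_score=0.85)
print(ok, changes)  # False {'reasoning_effort': ('high', 'medium')}
```

The point is not the scoring function. The point is that a one-line settings change cannot ship silently: it produces a diff, and the diff has to pass evals before rollout.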

What is a harness? (And why you should care)

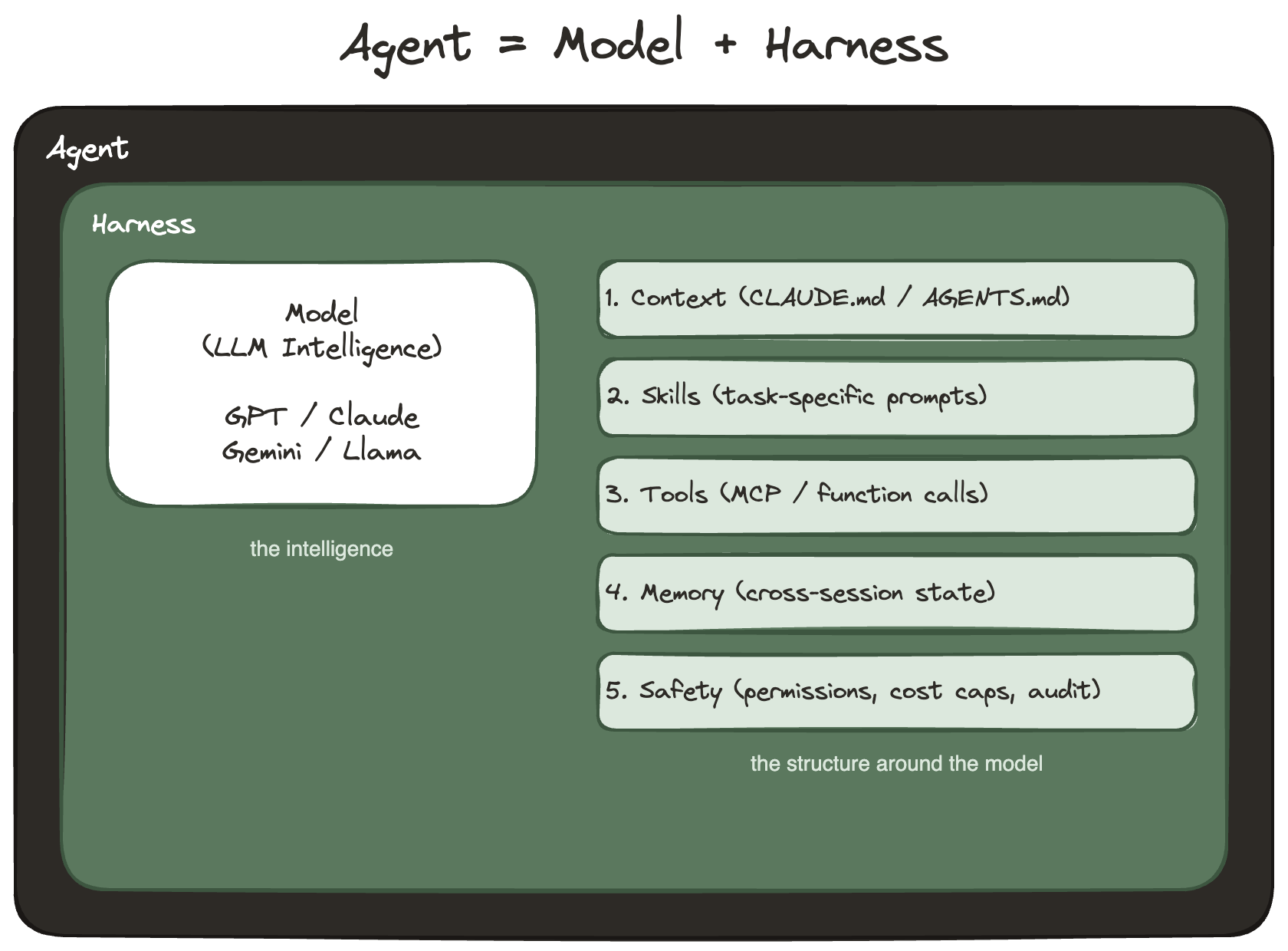

Agent = Model + Harness.

Easy enough equation.

Think of it like a race car.

The engine is the AI model. GPT-5.5, Claude, Gemini. Raw power. Every team has access to the same engines.

The harness is everything else: the chassis, the suspension, the telemetry, the pit crew protocols, the tire strategy. The system that translates raw engine power into winning races.

Two teams can run the same engine. The one with the better harness wins.

In AI terms: the harness is every piece of code, configuration, and execution logic that wraps around the model. Memory systems that persist across sessions. Sandbox environments where agents run code safely. Tool registries that let agents discover and use capabilities. Context management that decides what information the model sees. Orchestration that coordinates multiple agents. Evals that verify the output meets your quality bar.

The model provides intelligence. The harness makes that intelligence useful.
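Here is a toy version of Agent = Model + Harness. Every name is hypothetical, and the model is a stub function so the example runs offline. The `Harness` covers three of the pieces listed above: context management (how much history the model sees), a tool registry, and an eval check on the output. Swapping `toy_model` for a real model call would leave the harness untouched, which is the race-car point: same engine slot, different chassis.

```python
from dataclasses import dataclass, field
from typing import Callable

# Hypothetical model signature: takes the visible messages, returns a reply.
Model = Callable[[list[str]], str]


@dataclass
class Harness:
    max_messages: int                       # context management: history window size
    tools: dict[str, Callable[[str], str]]  # tool registry: name -> function
    check: Callable[[str], bool]            # eval: does the output meet the bar?
    history: list[str] = field(default_factory=list)  # session memory

    def step(self, model: Model, user_msg: str) -> str:
        self.history.append(f"user: {user_msg}")
        reply = model(self.history[-self.max_messages:])
        # Convention assumed for this sketch: "TOOL <name> <arg>" means call a tool.
        if reply.startswith("TOOL "):
            _, name, arg = reply.split(" ", 2)
            self.history.append(f"tool {name}: {self.tools[name](arg)}")
            reply = model(self.history[-self.max_messages:])
        if not self.check(reply):
            reply = "[held back: failed output check]"
        self.history.append(f"agent: {reply}")
        return reply


@dataclass
class Agent:
    model: Model
    harness: Harness

    def ask(self, msg: str) -> str:
        return self.harness.step(self.model, msg)


def toy_model(visible: list[str]) -> str:
    # Stub "engine": asks for the add tool, then reports the tool's result.
    last = visible[-1]
    if last.startswith("tool add:"):
        return "The answer is " + last.split(": ", 1)[1]
    return "TOOL add 2+3"


def add(expr: str) -> str:
    a, b = expr.split("+")
    return str(int(a) + int(b))


agent = Agent(toy_model, Harness(max_messages=4, tools={"add": add}, check=lambda r: len(r) < 200))
answer = agent.ask("What is 2+3?")
print(answer)  # The answer is 5
```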

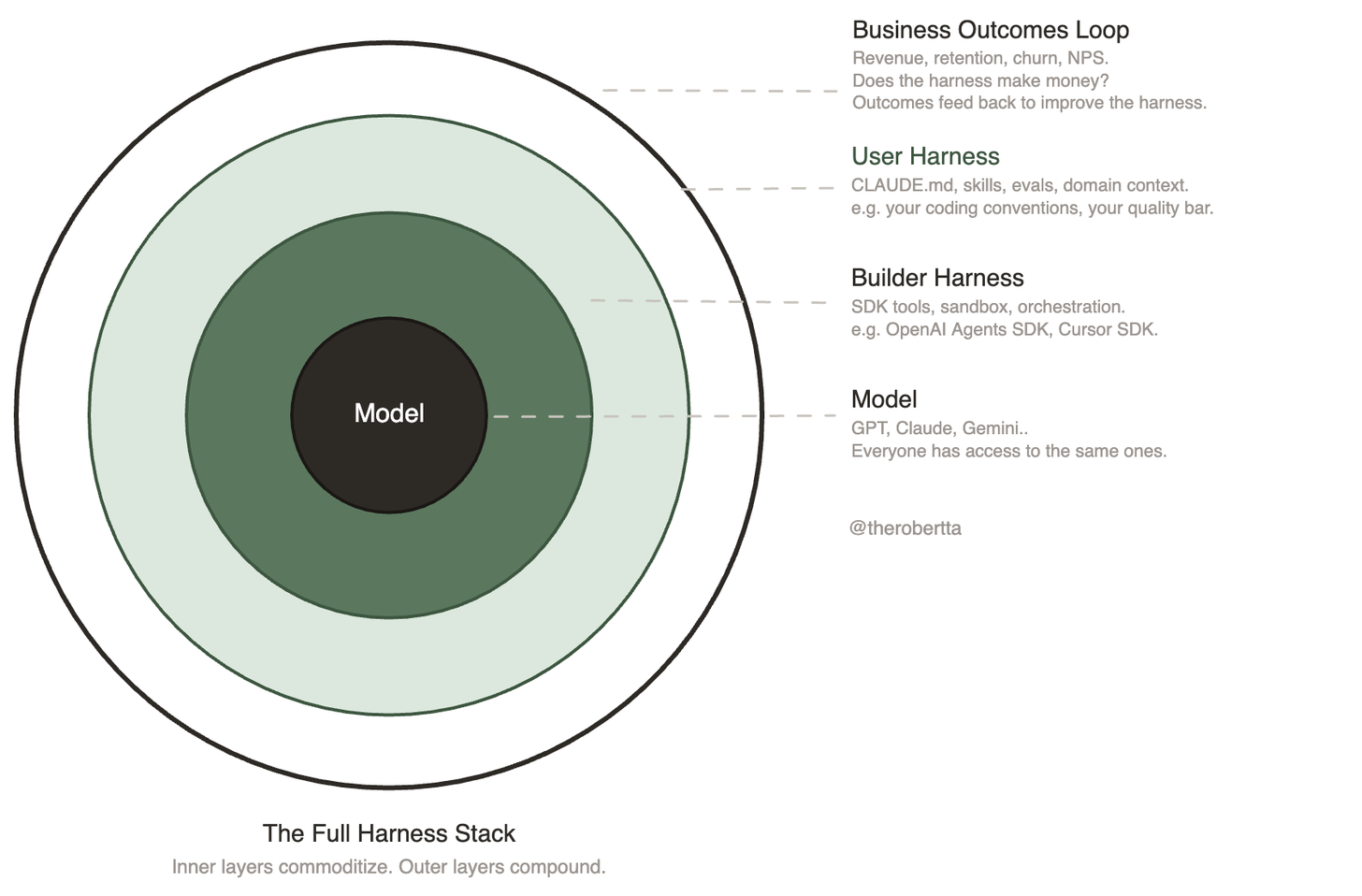

Martin Fowler visualized this as concentric rings: the model at the center, the builder’s harness around it, your custom harness around that.

I extend his model with a fourth outer ring: business outcomes.

Everything we do when creating software products (and now AI products) MUST be informed by real-world market signal.

The inner rings are commoditizing.

Everyone will install the same OpenAI SDK or Cursor SDK. The outer ring is your moat: does your harness connect to real business outcomes? Revenue, retention, churn. Is the agent’s work making you money? Is the signal from those outcomes feeding back into the harness to make it better tomorrow?

That’s where my Walking Dead thesis meets harness engineering. The walking dead have models. Some even have harnesses. None of them have closed the loop to business outcomes. They ship features, but they don’t measure whether those features compound domain understanding. The outermost ring sits empty.

The companies that survive will fill that ring.

The convergence

Three SDKs. One week. Same bet.

OpenAI released sandbox agents with durable execution. Cursor released their entire agent runtime as an npm package. Amazon released managed agents on Bedrock. In the same seven days.

When three companies independently ship the same architectural pattern in the same week, that’s not coincidence. That’s convergence on a market truth.

Harness engineering is graduating from a discipline into a product category.

The vocabulary that’s becoming standard

OpenAI’s SDK codifies the terms we’re all going to be using. Here’s the quick reference:

- Agent: LLM-powered worker configured with instructions + tools. The primary unit of work.

- Harness: Everything around the model: orchestration, tools, memory, filesystem, context management.

- Sandbox: Isolated compute where agent-generated code runs without access to host credentials or production.

- Handoff: Agent-to-agent task delegation. The orchestrator routes to specialists.

- Guardrails: Input/output validation enforcing safety, content rules, formatting.

- Tracing: Built-in observability. Automatic recording of the full execution graph.

- AGENTS.md: Convention file defining agent instructions, constraints, and context. Loaded at startup.

- Durability: Snapshotting + rehydration. Agent state persists across container restarts.

- Skills: Capability modules loaded on demand via progressive disclosure.

- Blast Radius: Scope of potential damage from unsafe execution. Sandbox contains it.
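Several of these terms are easier to hold onto when the pieces sit together. This is a framework-free sketch, not the OpenAI Agents SDK's API. `SimpleAgent`, `orchestrate`, and the keyword router are hypothetical. The flow is simple:

1. An orchestrator hands a task off to a specialist.
2. An output guardrail checks the result before it leaves the system.
3. Every step is appended to a trace.

```python
from dataclasses import dataclass
from typing import Callable

# Tracing: every step appends (who, what) so the full execution path can be replayed.
trace: list[tuple[str, str]] = []


@dataclass
class SimpleAgent:
    name: str
    handle: Callable[[str], str]  # stand-in for a model-backed worker


def no_secrets(text: str) -> bool:
    """Output guardrail: reject anything that looks like a leaked credential."""
    return "API_KEY" not in text


def route(task: str) -> str:
    # Hypothetical keyword router; in practice the model often picks the handoff itself.
    return "billing" if "refund" in task.lower() else "devops"


def orchestrate(task: str, specialists: dict[str, SimpleAgent]) -> str:
    target = specialists[route(task)]
    trace.append(("orchestrator", f"handoff -> {target.name}"))  # handoff
    output = target.handle(task)
    trace.append((target.name, "completed"))
    if not no_secrets(output):  # guardrail runs before anything leaves the system
        trace.append(("guardrail", "blocked output"))
        return "Blocked by guardrail."
    return output


specialists = {
    "billing": SimpleAgent("billing", lambda t: "Refund issued for invoice #42."),
    "devops": SimpleAgent("devops", lambda t: "Set API_KEY=abc123 in the env file."),
}

r1 = orchestrate("Please refund my last invoice", specialists)
r2 = orchestrate("Fix the deploy config", specialists)
print(r1)  # Refund issued for invoice #42.
print(r2)  # Blocked by guardrail.
print(trace)
```

Blast radius shows up here in miniature. The devops specialist produced something unsafe, but the guardrail kept it from reaching the user, and the trace records exactly where it was stopped.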

And their memory taxonomy, which I think is especially useful:

- Task Memory: Current execution run only

- Project Memory: Persists across runs within a workspace

- User Memory: Stored preferences, style, recurring constraints

- Policy Memory: Governance rules (“never do X”), compliance

These are the terms your team needs to align on. Learn them now.
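One way to make the four memory scopes concrete is to give each a different lifetime and a different writer. This sketch works under that assumption only; it is not how OpenAI's SDK stores memory. `MemoryStore` and its methods are hypothetical.

- Task memory is wiped at the start of every run.
- Project memory is keyed by workspace.
- User memory follows the person.
- Policy memory is set by operators and cannot be written by the agent.

```python
from dataclasses import dataclass, field


@dataclass
class MemoryStore:
    """Hypothetical store with four scopes, each with a different lifetime."""
    policy: list[str] = field(default_factory=list)                   # governance rules
    user: dict[str, str] = field(default_factory=dict)                # per-person preferences
    project: dict[str, dict[str, str]] = field(default_factory=dict)  # keyed by workspace
    task: dict[str, str] = field(default_factory=dict)                # current run only

    def start_run(self) -> None:
        self.task.clear()  # task memory does not survive between runs

    def remember(self, scope: str, key: str, value: str, workspace: str = "") -> None:
        if scope == "task":
            self.task[key] = value
        elif scope == "project":
            self.project.setdefault(workspace, {})[key] = value
        elif scope == "user":
            self.user[key] = value
        elif scope == "policy":
            raise PermissionError("policy memory is set by operators, not by the agent")
        else:
            raise ValueError(f"unknown scope: {scope}")

    def context_for(self, workspace: str) -> list[str]:
        """What the model sees: policy first, then user, project, and task memory."""
        lines = [f"POLICY: {rule}" for rule in self.policy]
        lines += [f"user.{k}={v}" for k, v in self.user.items()]
        lines += [f"project.{k}={v}" for k, v in self.project.get(workspace, {}).items()]
        lines += [f"task.{k}={v}" for k, v in self.task.items()]
        return lines


mem = MemoryStore(policy=["Never push directly to main."])
mem.start_run()
mem.remember("user", "style", "terse commit messages")
mem.remember("project", "test_cmd", "pytest -q", workspace="api")
mem.remember("task", "current_file", "billing.py")
mem.start_run()  # next run: task memory is gone, the rest persists
print(mem.context_for("api"))
# ['POLICY: Never push directly to main.', 'user.style=terse commit messages', 'project.test_cmd=pytest -q']
```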

What harness-as-a-service means

For the past year, “harness engineering” meant: you build the infrastructure around your model yourself. Memory. Context management. Tool orchestration. Self-verification. Sandbox execution. You stitch it together, you maintain it, you debug it when it breaks.

That era is ending.

The new era: you buy a harness that works out of the box.

My prediction is that this pattern will proliferate and become a mainstay.

Cursor’s SDK release proved it.

Cursor gives you codebase indexing, semantic search, MCP servers, skills, hooks, and subagents in a single npm install. OpenAI’s gives you sandbox execution, durable state, orchestration, and tool registries. Both ship as working agents, not as pieces you have to assemble.

Aparna from Arize articulated the distinction perfectly: a framework asks a human to wire abstractions together. A harness works from the moment you install it.


Why this matters for AI builders right now

Jonathan and I talked through the implications on this week’s pod shoot (out soon on YouTube, Spotify, and Apple Podcasts, so subscribe on your favorite platform if you want highly considered and informed takes on the world of AI).

Three things became clear:

One. Switching costs are dropping fast. Production teams can get Cursor’s SDK running in a day (Faire reported going live in under 24 hours). OpenAI’s primitives snap into existing AWS infrastructure without new compliance reviews. The cost of adopting, or switching, harnesses is approaching zero.

That means your moat is not your harness implementation. Your moat is what your harness knows about your domain. The context it encodes. The evals it enforces. The skills it accumulates.

Two. I see a harness marketplace forming in the future. If harnesses work out of the box for specific use cases, someone will sell them. OpenAI releases open standards. Teams build domain-specific harnesses on those standards. The standard gets sticky. Other teams buy those harnesses instead of building from scratch.

Jonathan put it well: “You could say we have this harness for sale. You can run this version of agents within it. We’re going to see these big players come out with their versions and specialized niche versions.”

Health agent harnesses. Legal agent harnesses. Financial agent harnesses. Each encoding domain-specific skills, tools, and evals that took months to build. Sold as packages.

Three. The competitive dynamic just shifted. Cursor beat Claude Opus on SWE-bench using an open-source model (Kimi K2.5) with a better harness. That proved the harness matters more than the model for coding tasks.

Now Cursor is selling that harness. And OpenAI is selling theirs as a platform. The next frontier: which harness wins for which domain?

What’s interesting to me is the different strategic plays:

Cursor is going super vertical.

OpenAI is going horizontal.

Both make sense given their positions.

The connection to the Walking Dead thesis

I wrote about this a few weeks ago in The Walking Dead of Enterprise Software. The moat question is the same: what does your company understand that is genuinely hard to understand, and does that understanding compound daily?

Harness-as-a-service (my working terminology) makes this question sharper. If your agent’s harness is a commodity SDK anyone can install, your differentiation has to live somewhere else. It lives in:

- Domain context: the accumulated understanding of your specific customers, workflows, and edge cases

- Evals: the quality bar you’ve defined and enforced, trained on your failures

- Skills: the institutional knowledge encoded over months of production operation

- The closed loop: the system that captures outcomes, feeds them back, and compounds learning

The walking dead companies, the ones that are still investing in their UX/UI as the moat, are the ones who think the harness IS the moat.

Not quite, in my view.

The harness is infrastructure, and the information that flows through it is the moat.

The companies that survive will be the ones running a closed loop: agent acts, outcome measured, learning captured, agent improves, repeat. The harness enables the loop.

The domain knowledge compounds inside it.
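The act, measure, capture, improve loop described above can be sketched in a few lines. Everything here is hypothetical and the outcomes are simulated, not real data. The loop picks a playbook, records a business outcome for it, and prefers whatever has worked. The scoring is a Laplace-smoothed success rate with greedy selection, a deliberately simple bandit-style choice swapped in for illustration. A production loop would keep exploring, for example with epsilon-greedy.

```python
from collections import defaultdict


class ClosedLoop:
    """Hypothetical feedback loop: pick a playbook, record the outcome, prefer what works."""

    def __init__(self, playbooks: list[str]):
        self.playbooks = playbooks
        self.wins: dict[str, int] = defaultdict(int)
        self.tries: dict[str, int] = defaultdict(int)

    def score(self, playbook: str) -> float:
        # Laplace-smoothed success rate: untried playbooks start at 0.5 instead of 0.
        return (self.wins[playbook] + 1) / (self.tries[playbook] + 2)

    def choose(self) -> str:
        # Greedy for clarity; ties go to the first playbook in the list.
        return max(self.playbooks, key=self.score)

    def record(self, playbook: str, succeeded: bool) -> None:
        self.tries[playbook] += 1
        self.wins[playbook] += int(succeeded)


def measure_outcome(playbook: str) -> bool:
    # Simulated business signal (ticket resolved, renewal, no churn).
    return playbook == "domain"


loop = ClosedLoop(["generic", "domain"])
history = []
for _ in range(5):
    pick = loop.choose()                      # agent acts
    loop.record(pick, measure_outcome(pick))  # outcome measured, learning captured
    history.append(pick)                      # next choice reflects what was learned
print(history)  # ['generic', 'domain', 'domain', 'domain', 'domain']
```

The harness here is just `choose` and `record`. What compounds is the accumulated `wins` and `tries`, the domain-specific evidence about what actually works.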

What to do this week

If you’re building AI products:

Read the Arize article. It defines the vocabulary. Then read the LangChain anatomy post for the component model. Then look at the Cursor SDK and OpenAI Agents SDK docs side by side.

Ask yourself: am I building a framework (human-configured state graphs) or a harness (agent-driven, works out of the box)? If you’re still in framework territory, the convergence this week should make you uncomfortable. The market is moving toward harnesses. Fast.

If you run a consultancy or forward-deployed engineering practice: the AWS partnership program now accepts agent harness case studies.

Distribution!

Two anonymized case studies get you on the marketplace.

This is a real acquisition channel for enterprise deal flow.

And if you’re an enterprise leader evaluating your AI strategy: the question is no longer “should we build agents?”…

It’s “which harness do we standardize on?”

OpenAI’s (general-purpose, AWS-native) or Cursor’s (coding-specific, works today) or both.

That’s the architectural decision that compounds.

Build in public update

Here are a few key things we’ve made progress on in the startup lately:

- Clarity API Update: Jonathan and I have made progress on what we call a “stacked harness architecture,” which is essentially about improving the developer experience. Kudos to him for synthesizing this into a coherent direction for evolving Clarity API, our solution that helps AI builders with context engineering and management so they can compound context at scale across AI apps.

- Ops: We’re starting to move our accounting to an accrual basis, so we can get the clearest picture of where our resources go for service delivery as forward-deployed engineers vs. the IRR on the product SKUs we’re baking to bring to market. It’s the less exciting but absolutely necessary investment I think we need to succeed long term. I’m learning to nerd out about it after getting headaches thinking through my chart of accounts and all that. Slowly becoming a better CEO over time. I’m in this for the long game, so I gotta love being a student of the game.

- Distribution: I’ve been finding success building up our referral/affiliate program, where I pay a finder’s fee when a warm intro converts into a sale, to better sell B2B AI services (make your AI better) plus software (Clarity API). We’re building a more reliable pipeline of qualified B2B leads now. Founder-led sales cycles still take 1-4 months depending on the size of the company, but at least I’m no longer setting or getting appointments; I can just start closing after an intro. Higher leverage for my time. I recently wrote about a cold outbound campaign I automated with AI, and I’m getting a couple of bites a month there too. Highly worth it. I also see a path to PMF for Clarity API if we keep playing our cards right and take the forward-deployed engineer approach. More on this in later issues as our customer development cycles evolve.

On a personal note:

- Physical health: I’m recovering from my recent low-iron state; I’ve been supplementing more and dialing in my running training again. My 100-mile ultramarathon was originally scheduled for this month, but I’m pushing it out to August to accommodate my recovery period. It’s been tough not going all out like I usually do in training, and tempering my expectations. On the flip side, I’ve been peaking in my rock climbing and sending harder problems than ever. I tend to go through this cycle where I work my body a hell of a lot, then it breaks down just a tiny bit, then I’m forced to recover, then I PR. Such as it is. Feeling good, and I’m going to ramp my weekly miles back up to 50-70 in preparation for the target: a 100 mi unsupported ultra in August 2026! Hope it goes!

- Mental & emotional health: Had a good therapy appointment recently to better figure out boundaries around family, life, love, and dreams. I feel like we’re all figuring that out. I was telling my Co-Founder about it, and he said “Wow you cover a lot of ground in therapy.” It made me wonder how other people approach their own therapy sessions. I approach it like business: I come in with a no-nonsense agenda and a goal, and when I’m at my best I send my therapist a pre-read. That way, I cover as much as possible with my consultant of the mind. If anyone wants more on this, let me know.

- Family health: On that note of family, I’ve been leaning into chosen family a lot more. I always have to remind myself to get out of my workaholic rabbit hole (I love my job, I love what I do, I love getting after my dreams) and lean on my support network of friends and chosen family. To all those I spent time with in the last couple of months, thanks for the quality time. I don’t know if other entrepreneurs are like this, but I tend to rabbit-hole too much and almost forget that I’m human and need social connection. I’ve been trying to regulate this better. AKA: feel lonely, text or call friends to catch up. Sometimes I get in my head and think I need to have a perfect hangout to rejuvenate that social connection bucket, but oftentimes just a text or call provides so much both ways. Lately, I’ve been just doing this whenever I think of one of my loved ones so I don’t overthink it. It’s been really good for me. Gotta keep the engine going.

Dog Dad: Kenji Update

And of course, one of my primary identities is trying to be the best dog dad I can be. Kenji has grown up to be such a good boy. Months ago, I couldn’t have anybody over because he would be really aggressive and give himself the job of protecting the home, and now he listens to his dad about who is safe and who is not.

He’s also learned to drink from a water fountain! It’s adorable.

He prefers it to the dog bowl situation. I think it’s because he sees me drink from the human oriented one, and thinks it must taste better. Probably just wants to be like his dad.

He has gotten SO big (13 months now) compared to when I first got him (5.5 months). Look at this little derp:

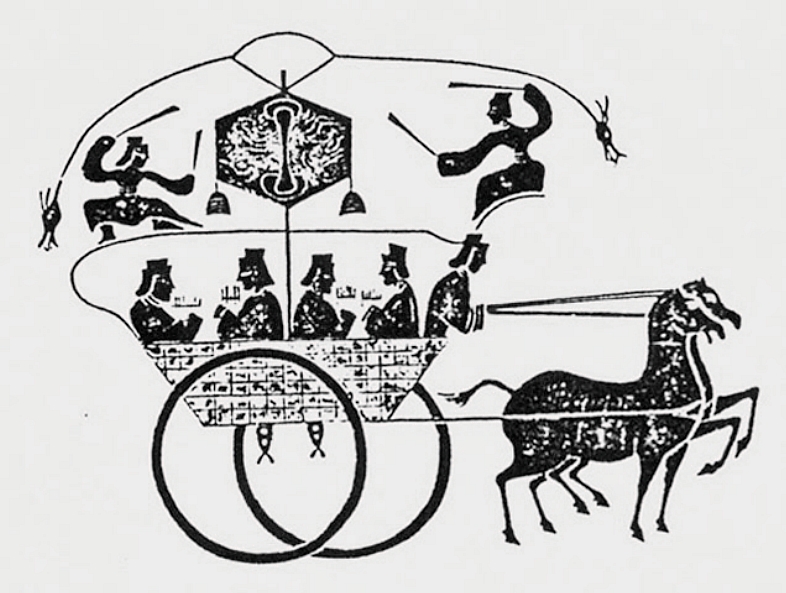

The Original Harness

Every week I try to learn something new about our vast world, and I share it here. Sometimes it’s related to the main article, sometimes it’s just something cool. Enjoy.

The word “harness” entered English around 1300 CE. It meant armor. The protective structure around a knight that let them do dangerous things without dying.

By the 1500s, it had shifted to mean the rigging around a horse. Not the horse itself. The system of straps, reins, and connections that translated raw animal power into directed, useful work.

The horse has the strength. The harness channels it towards the rider or chariot.

Four thousand years ago, the first horse trainers on the Eurasian steppe figured this out. A horse without a harness is beautiful but useless for agriculture, for transport, for civilization. A horse with a harness built the Silk Road. Won wars. Fed cities.

The horse didn’t change. The harness made it productive.

I keep thinking about this as I watch OpenAI, Cursor, and Amazon all ship “agent harnesses” in the same week. They picked the word deliberately. It’s in the tech and AI engineering zeitgeist.

The AI model is the horse. Powerful, fast, capable of incredible things.

Also unpredictable, hard to direct, prone to running off in the wrong direction if left unchecked.

The harness translates raw capability into directed, useful work.

But here’s what the ancient horse trainers knew that we’re still learning: the harness doesn’t just control the horse. It teaches the horse. A well-designed harness provides feedback. Pressure and release. The horse learns which direction to pull. Which pace to maintain. When to stop.

That’s the closed loop.

The companies I wrote about in The Walking Dead of Enterprise Software have horses (AI models). Some of them even have harnesses (infrastructure and proprietary curated datasets they own, their IP).

But most of them don’t have the feedback mechanism that teaches the horse over time. No pressure and release. No learning loop.

The harness holds the horse in place but doesn’t compound understanding.

The companies that survive will be the ones whose harnesses teach. Where every customer interaction tightens the reins in the right direction. Where the system tomorrow is measurably better than the system today because yesterday’s outcomes fed back into today’s context.

A harness without feedback is a cage. A harness with feedback is a training system.

Four thousand years of evidence.

Thanks for reading.

This week we recorded the first episode of our new podcast series, AI Weekly Clarity, under my Self Aligned podcast. It will be out on all platforms soon.

🎙 Subscribe to my podcast on YouTube, Spotify, and Apple Podcasts if you want the latest signal from the noise, weekly.

Jonathan and I make sense of the landscape together as AI builders on the front lines.

We’ll try to do this every week as an experiment, and if it resonates, we’ll keep going.

What harness architecture decisions is your team making right now? Reply and tell me.

I read every response.

Robert

P.S. Full pod episode with screen shares and demos on the Self Aligned podcast soon.

References

- [1] The Next Evolution of the Agents SDK · OpenAI · OpenAI Blog · 2026

- [2] Amazon Bedrock + OpenAI Managed Agents · AWS · AWS What's New · 2026

- [3] OpenAI on AWS · OpenAI · OpenAI Blog · 2026

- [4] Cursor SDK Release · Cursor · Cursor Changelog · 2026

- [5] What Is an Agent Harness? · Aparna Dhinakaran · Arize AI · 2026

- [6] The Anatomy of an Agent Harness · LangChain · LangChain Blog · 2026

- [7] April 23 Postmortem · Anthropic Engineering · Anthropic · 2026

- [8] Harness Engineering for Coding Agent Users · Martin Fowler · martinfowler.com · 2026

- [9] The Walking Dead of Enterprise Software · Robert Ta · Self Aligned · 2026

- [10] History of horse-drawn transport · Wikipedia · 2026