I sat across from an enterprise AI product lead last week.

She ships an AI agent to 200+ customers in two months. I asked her how she knows whether the agent’s responses are getting better or worse.

She went quiet. Then she said: “We’re evaluating BrainTrust, Arize… we don’t essentially have an eval platform.”

I hear some version of this every week. The team knows quality is the problem. So they go shopping for a platform to fix it. And that instinct, reaching for a tool before defining what you’re measuring, is the trap that derails more enterprise AI products than bad models ever will.

Hamel Husain, who’s helped 30+ companies build AI products (including early work on GitHub Copilot), puts it bluntly: “Unsuccessful products almost always share a common root cause: a failure to create robust evaluation systems.” But here’s the part most teams miss. He also says: “Don’t buy fancy LLM tools. Use what you have first.”

This newsletter walks you through what to do before you buy anything.

🤖 The Signal This Week

The most interesting things I found this week in AI, tech, and startups.

Jensen Huang Wants 100 AI Agents For Every Human at NVIDIA

NVIDIA’s CEO laid out his company’s workforce future: 7.5 million AI agents, 75,000 humans. A 100-to-1 ratio.

Robert’s Take: The ratio grabbed headlines. The harder question didn’t. Those agents need to understand what each human means when they say “pipeline” or “revenue,” and every department defines those terms differently. Jensen’s math assumes context-aware agents. Nobody I talk to has built one yet.

Cursor Hit $2B in Revenue. Anthropic Might Kill It Anyway.

67% Fortune 500 penetration. Fastest-growing company in tech history. And Claude Code is eating into Cursor’s core product from below.

Robert’s Take: If you built your company as a layer on top of someone else’s model, you’re one API update from irrelevance. The companies surviving this will own their context layer. That’s the only durable moat when the model provider can ship the product.

Anthropic Told the Pentagon No. Then Claude Hit #1 on the App Store.

Anthropic refused Pentagon demands for unrestricted military use. The administration blacklisted them. The week after, Claude displaced ChatGPT at #1 on the iPhone App Store. $2.5B annualized revenue.

Robert’s Take: Anthropic ran the experiment every business school debates in theory. Say no to the largest customer on Earth, bet that your values attract a bigger market. The bet paid off. Pretty incredible move. I cancelled my ChatGPT subscription, and I’m all in on Claude myself. That tells you something about what builders want from their tools right now.

Respan Raises $5M to Close the Gap Between AI Evals and Production

YC-backed. Automates prompt updates, regression checks, drift alerts. 1B+ logs per month.

0%

of orgs have agents in production, but quality remains the top blocker

Robert’s Take: Respan looks promising precisely because it closes the loop between observation and action. But even Respan can’t tell you what “good” means for your domain. You still have to define that yourself. The platform is the last step, not the first.

NVIDIA Ships an Open-Source Agent Toolkit. 16 Enterprise Partners Already Use It.

Adobe, Salesforce, ServiceNow, Siemens, Box. OpenShell for policy-based security. AI-Q Blueprint cuts query costs by 50%+ while topping accuracy benchmarks.

Robert’s Take: The infrastructure layer for enterprise agents is solidifying faster than most teams realize. Pay attention to the cost curves. A competitor who does will undercut you. Curated data sets around economically valuable problems will be the moat, and enterprise companies who use agents to set up their companies to optimize for that will win in the long run.

🛠 The Eval Platform Trap (And What to Do Instead)

I talk to enterprise AI teams every week. The conversation follows the same pattern.

They demo the agent. It’s impressive.

Then I ask: “How do you know if this is getting better or worse?”

The room goes quiet.

Sound familiar?

Hamel Husain describes the exact same scene from his consulting work. He walks into a room, asks to see how they measure quality, and gets silence.

Then I ask what they’re doing about it. Nine times out of ten, the answer is: “We’re evaluating platforms.”

This is the trap.

The team knows they have a quality problem. They feel the urgency. So they start shopping. BrainTrust. Arize. LangSmith. Respan. They schedule demos. They compare feature matrices. They debate pricing.

Meanwhile, nobody on the team has spent 30 minutes reading their own agent’s outputs.

Why platforms fail you first

Hamel calls this the “tools trap.” Teams adopt dashboards with generic metrics like “helpfulness” and “coherence,” and those metrics create a false sense of progress. He watched one team celebrate a 10% improvement in their helpfulness score while users were still struggling with basic tasks.

Generic metrics measure things that are easy to measure, not things that matter for your domain.

A legal AI that scores high on “helpfulness” can still cite the wrong statute. A real estate AI with great “coherence” can still fail 66% of the time on date handling.

Off-the-shelf eval rubrics have the same problem. Hamel warns specifically against platforms where “an AI agent both creates an evaluation rubric and then immediately scores the outputs.”

That approach produces high confidence scores that mask underlying failures.

”It is impossible to completely determine evaluation criteria prior to human judging of LLM outputs.”

You literally cannot write your rubric before looking at your data. The criteria emerge from the data, not before it.

What to do instead: the 5-step process

I’ve synthesized Hamel’s framework with what I’ve seen work across the enterprise teams I advise. You can do all of this before buying a single tool.

1. Look at your data. Actually look at it.

Pull 50 real conversations from your trace logs. LangSmith, LangFuse, whatever you have. Read them. All of them.

Hamel recommends spending 30 minutes reviewing 20-50 LLM outputs every time you make a significant change. He says: “Looking at data might feel like clerical work, but it’s often the highest leverage thing you can do.”

Write open-ended notes on every failure you see. Don’t use a rubric. Don’t categorize yet. Just write down what went wrong in plain language.

2. Build your failure taxonomy from the bottom up.

Take your notes and use an LLM to cluster them into categories. Count the frequency of each category.

Hamel calls this “bottom-up analysis” and contrasts it with the “top-down” approach of starting with generic categories like “hallucination” or “toxicity.” Top-down sounds logical but misses domain-specific failures.

When his team did this at NurtureBoss, an apartment-industry AI assistant, they found three issues accounted for over 60% of all problems: conversation flow failures, handoff failures, and date handling errors. Date handling was failing 66% of the time. No generic eval platform would have surfaced “date handling” as a category. It emerged from looking at the data.

Continue reading

Get the full newsletter, free.

Join founders and builders who read Self Aligned every week.

3. Use binary pass/fail. Not scores.

“What makes something a 3 versus a 4? Nobody knows.”

Hamel is adamant on this. Use binary pass/fail for every evaluation. A 1-5 scale creates disagreement, hides uncertainty in the middle values, and is expensive to calibrate across annotators.

Binary forces clarity: did this output achieve its purpose or not? Pair the binary judgment with a written critique explaining why. The critique captures the nuance. The binary creates the signal you can act on.

Before

33%

Date handling pass rate

After

95%

One week of binary evals

No ambiguity. No interpretation needed.

4. Put a domain expert in charge. Not an engineer.

“The people best positioned to improve your AI system are often the ones who know the least about AI.”

Appoint one domain expert as what Hamel calls the “benevolent dictator” of quality. A psychologist for a mental health chatbot. A lawyer for legal document analysis. A real estate veteran for a property search agent. A senior analyst for an enterprise data tool.

This person doesn’t need to understand transformers. They need to understand what a good answer looks like in your domain. Prompts are written in English. The domain expert can iterate on them directly. Engineers should build infrastructure that empowers the expert, not translate their expertise through PowerPoint decks.

5. Build a simple viewer. Not a dashboard.

Hamel’s most emphatic recommendation: build a custom data viewer, not a generic dashboard.

“Every domain has unique needs that off-the-shelf tools rarely address.” You need to see the full conversation, the source context, the business metadata, and the annotation interface on one screen. The friction of clicking through three different systems to understand one interaction kills systematic analysis.

Build it in Streamlit, Gradio, or Shiny. AI-assisted development (Claude Code, Cursor) means you can ship a custom viewer in a day. That viewer will drive more improvement than any platform you demo this quarter.

The enterprise-specific wrinkle: ontology

Everything above applies to any AI product. Enterprise adds one more layer that I keep seeing trip up teams I talk to.

In enterprise, “pipeline” means one thing at a private equity firm and something else at a real estate company. “Revenue” has twelve definitions. Your agent translates business language into data language, and at most companies, a human does that translation manually per tenant.

You can’t grade an agent’s answer when you haven’t agreed on what the words mean for this specific customer. Build a lightweight ontology mapping per tenant: “when this customer says X, they mean Y in your schema.” Score agent answers against it. None of the eval platforms solve this natively. You’ll build this yourself, but it’s the difference between eval scores that mean something and eval scores that don’t.

When to buy a platform

After you’ve done steps 1-5. After you have a failure taxonomy, a domain expert running binary evals, and a custom viewer that your team actually uses.

At that point, you’ll know exactly what you need from a platform. You’ll evaluate Arize, BrainTrust, or Respan against your specific requirements, not against a feature comparison chart. You’ll buy the tool that fills a gap you can name, not one that promises to solve a problem you haven’t diagnosed.

Hamel uses platforms as “a backend data store” alongside his own custom annotation interfaces and Jupyter notebooks. The platform is infrastructure. The judgment is yours.

The cheat sheet

Step 1: Look at data. Read 50 real conversations, write open-ended failure notes. Takes 2-3 hours.

Step 2: Build taxonomy. Cluster your failure notes into categories, count frequency. Takes half a day.

Step 3: Binary evals. Grade pass/fail with written critiques per failure category. Ongoing, 30 minutes per change.

Step 4: Domain expert. Appoint one “benevolent dictator” of quality. Takes one meeting.

Step 5: Custom viewer. Build a simple annotation UI in Streamlit or Gradio. Takes one day.

You can start step 1 on Monday morning.

By Friday, you’ll know more about your agent’s quality than any platform demo could tell you.

You’re welcome.

Next week, let’s talk about Context Graphs.

References

- [1]A Field Guide to Rapidly Improving AI ProductsHamel Husain · hamel.dev · 2025

- [2]Using LLM-as-a-Judge For Evaluation: A Complete GuideHamel Husain · hamel.dev · 2025

- [3]Selecting The Right AI Evals ToolHamel Husain · hamel.dev · 2025

✌🏼 The Orangutan Who Invented Medicine

Every week I try to learn something new about our vast world, and I share it here. Sometimes it’s related to the main article, sometimes it’s just something cool. Enjoy.

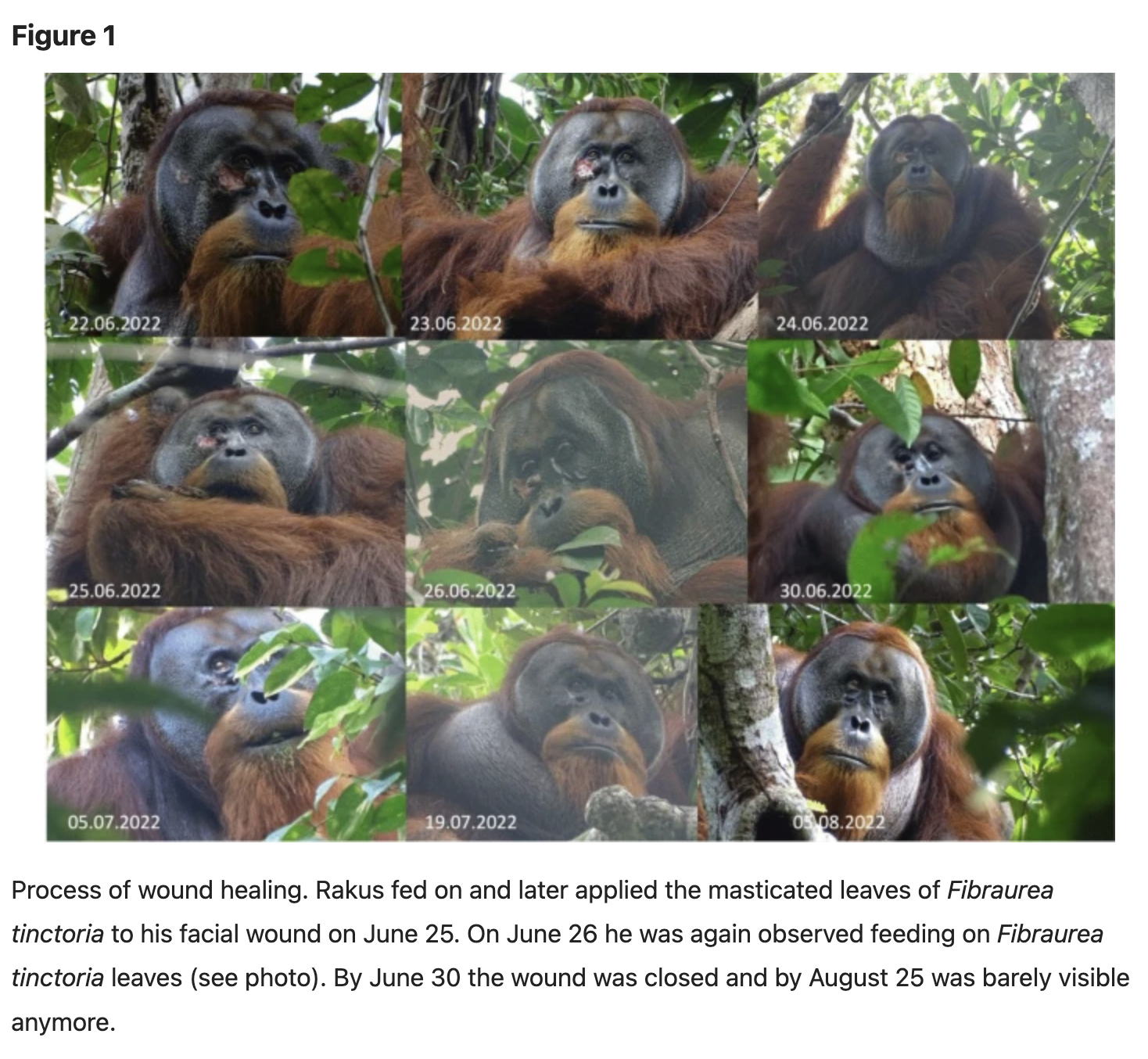

In June 2022, a wild Sumatran orangutan named Rakus got a wound on his face. Probably from a fight.

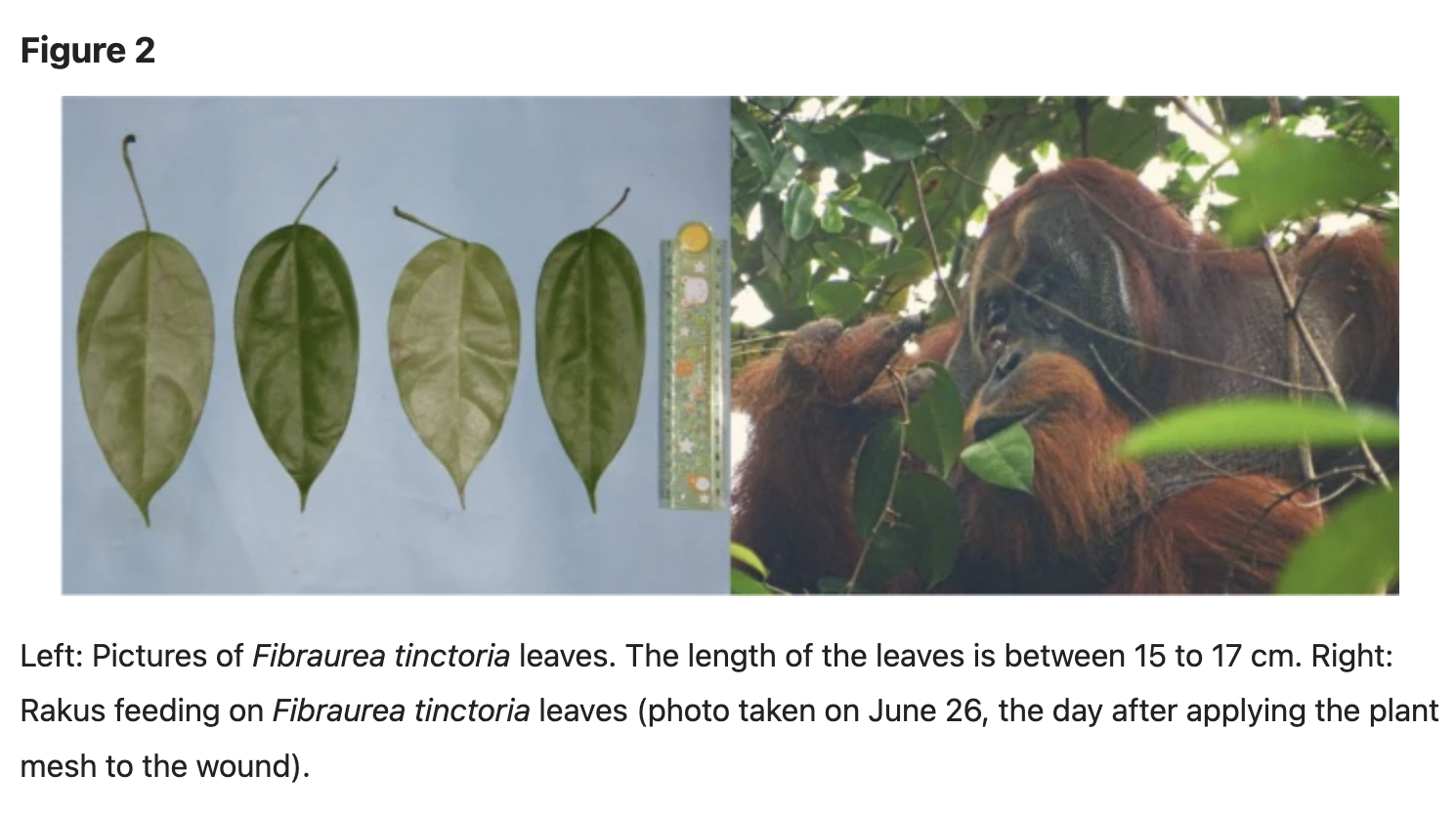

Three days later, researchers at the Suaq Balimbing research station watched him do something no wild animal had ever been documented doing. He chewed the leaves of a specific liana vine called Akar Kuning. He held the pulp in his mouth without swallowing. Then he dabbed it directly onto the wound. Repeatedly. For seven minutes.

Akar Kuning is used in traditional Southeast Asian medicine for its antibacterial and pain-relieving properties. Rakus’s group doesn’t eat this plant. He selected it specifically for this purpose.

Within five days the wound had closed. A month later, no scar.

Isabelle Laumer and Caroline Schuppli at the Max Planck Institute published the findings in Scientific Reports.

They called it the first documented case of active wound treatment with a biologically active plant by a wild animal.

Guess he evaluated correctly.