The largest qualitative study on AI ever conducted.

And the findings got me thinking about the future of work.

Are We Becoming Dogs?

Researchers at the Wolf Science Center in Vienna gave wolves and dogs the same puzzle:

A sealed container with food inside.

Figure out how to open it.

Here’s what made the study famous:

The dogs stopped trying.

When the puzzle got hard, dogs turned around and looked at the nearest human.

Waiting for help.

Waiting for someone to solve it for them.

Sound familiar?

Fifteen thousand years of domestication bred that into them.

Wolves survive by figuring things out.

Dogs survive by being useful to someone who figures things out for them.

The trade worked. Dogs got food, shelter, belly rubs.

They just lost the ability to solve particular problems on their own.

I think about this study every time someone asks me about the future of work.

Anthropic’s research centered on one question: “How has AI actually changed your life?”

Not theoretically. Not what LinkedIn influencers predict. What 81,000 people experienced.

I’ll share three key findings to make the argument that we should all be concerned with one fundamental question:

Are we becoming dogs?

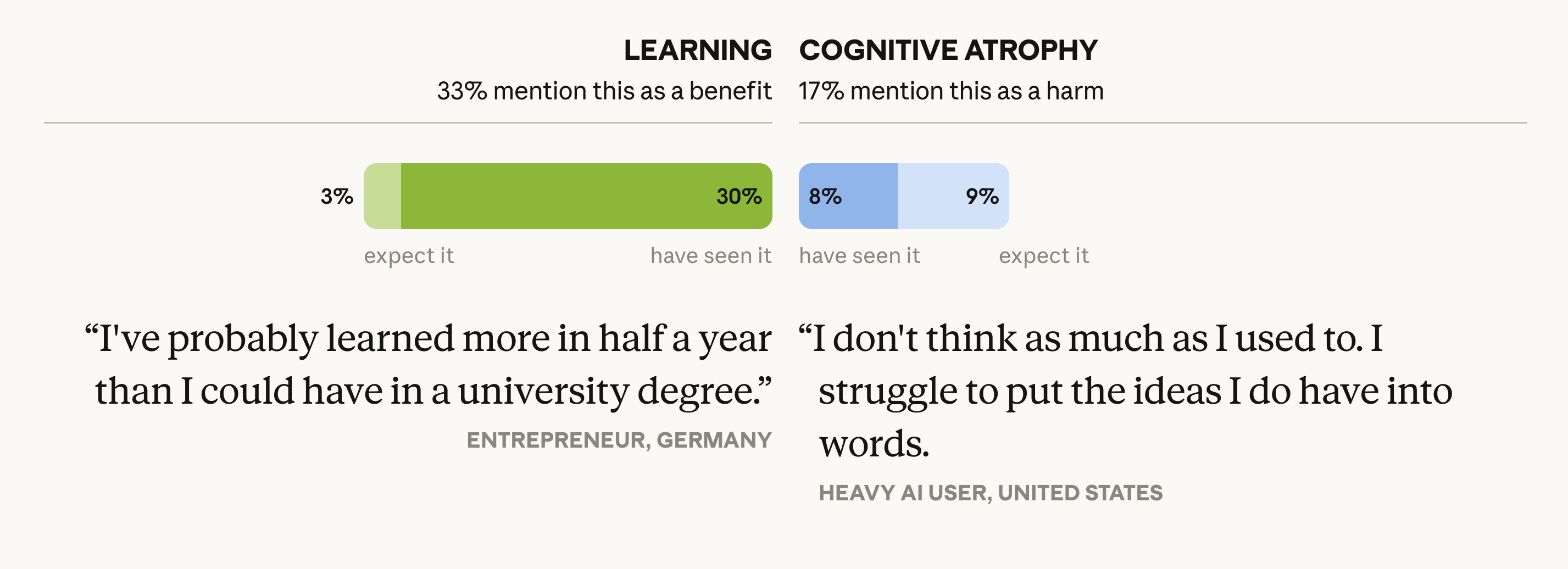

The same capability creates the benefit AND the harm.

One group said AI made them better learners.

Another felt their cognitive abilities declining.

Those groups overlap significantly. Some of the same people who learned faster also worried they were getting dumber.

Dogs didn’t get dumber overnight.

Generation by generation, they traded independent problem-solving for the comfort of looking back at a human.

Each generation was slightly more dependent, slightly less capable on its own.

Each generation also had a better life.

Both things were true.

I see this in my own work.

I build with Claude Code every day. I ship features in hours that would have taken weeks.

I also haven’t written code in eight months. Both things are true at the same time.

It’s slightly worrying.

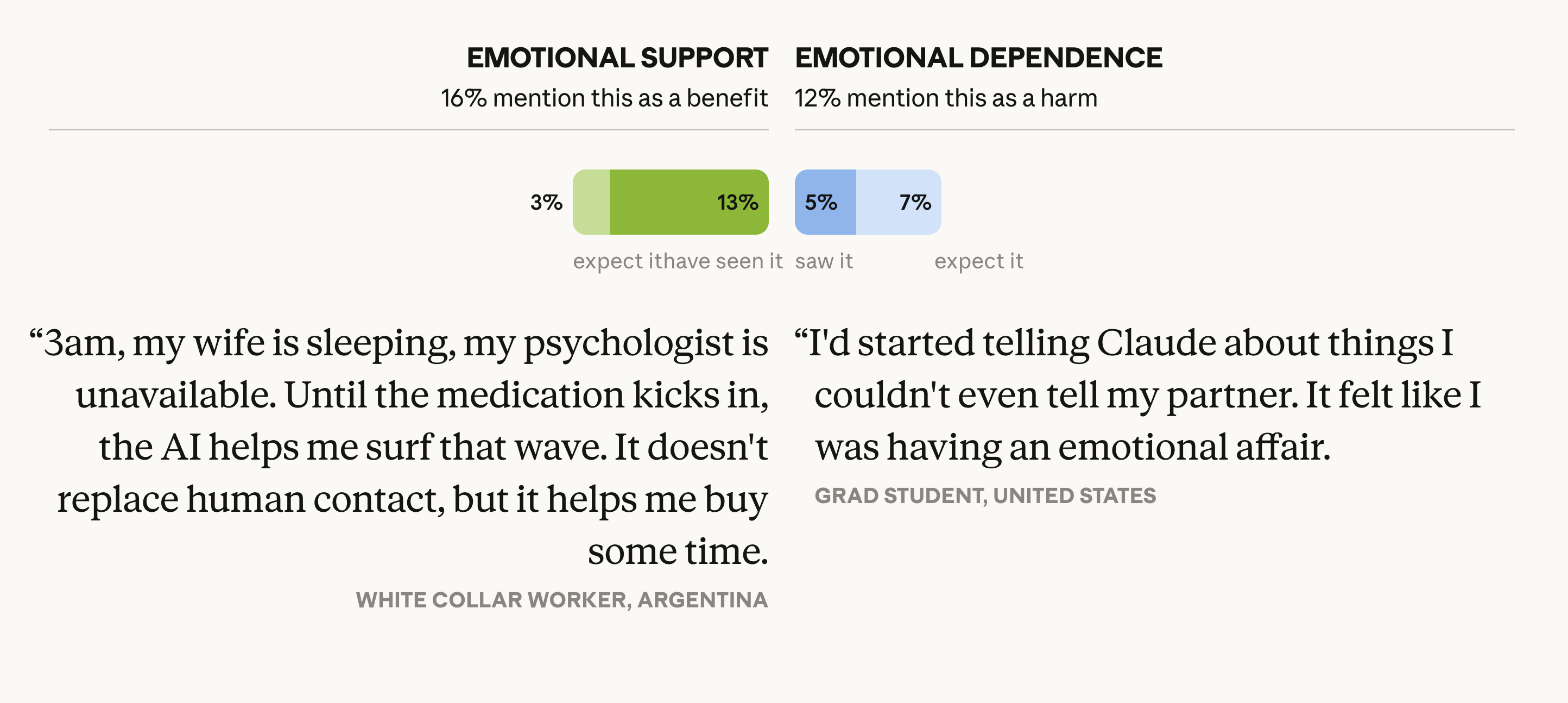

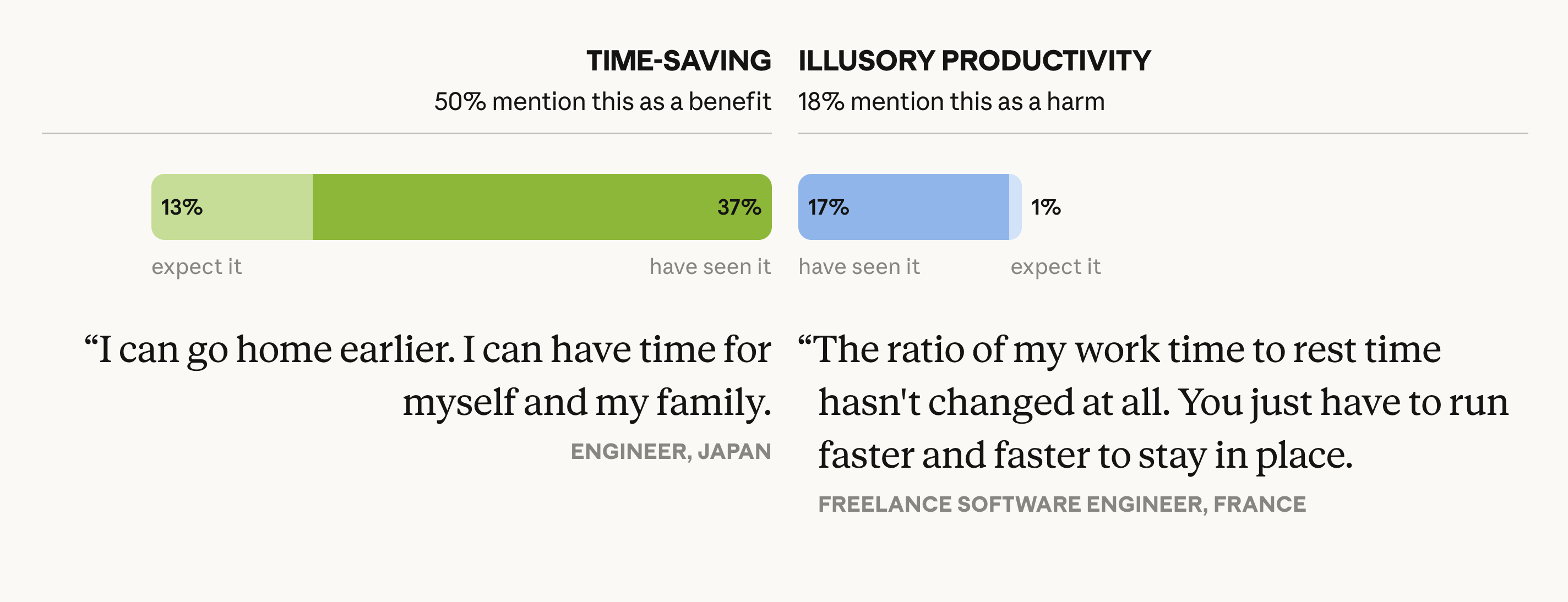

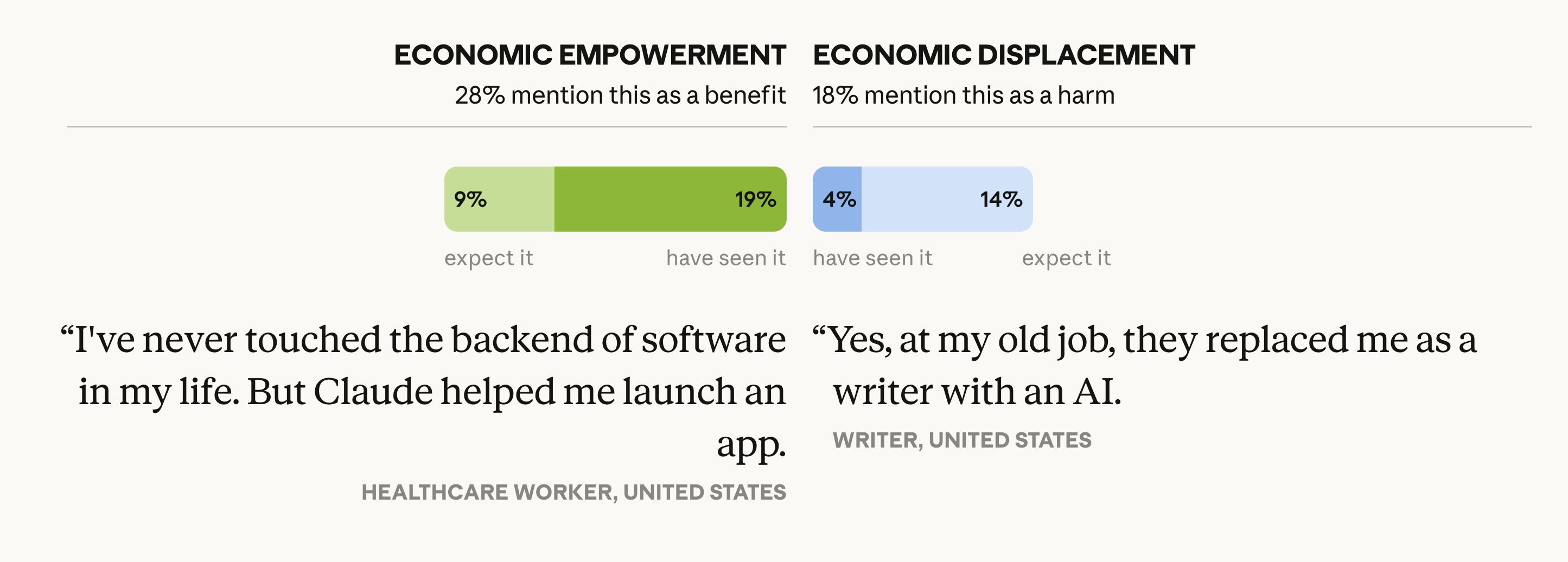

The Anthropic study identified five of these “tensions” where benefit and harm coexist inside the same person.

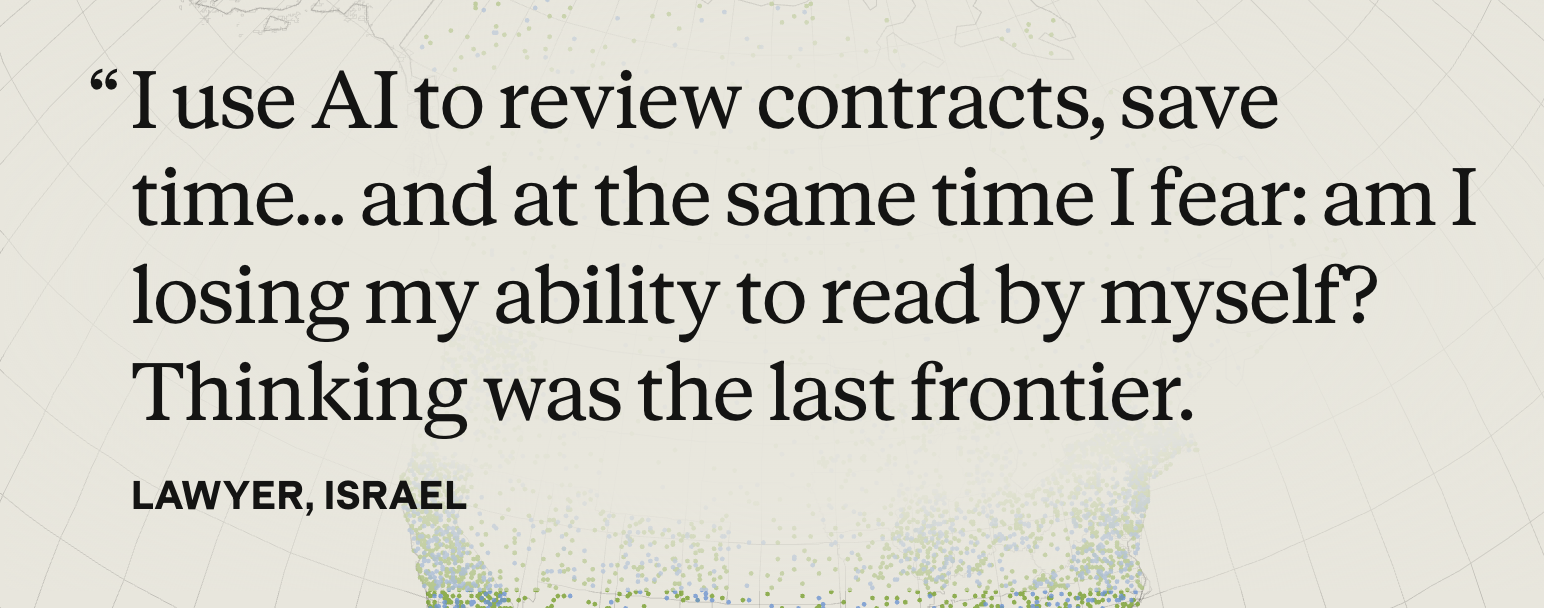

Learning vs. cognitive atrophy.

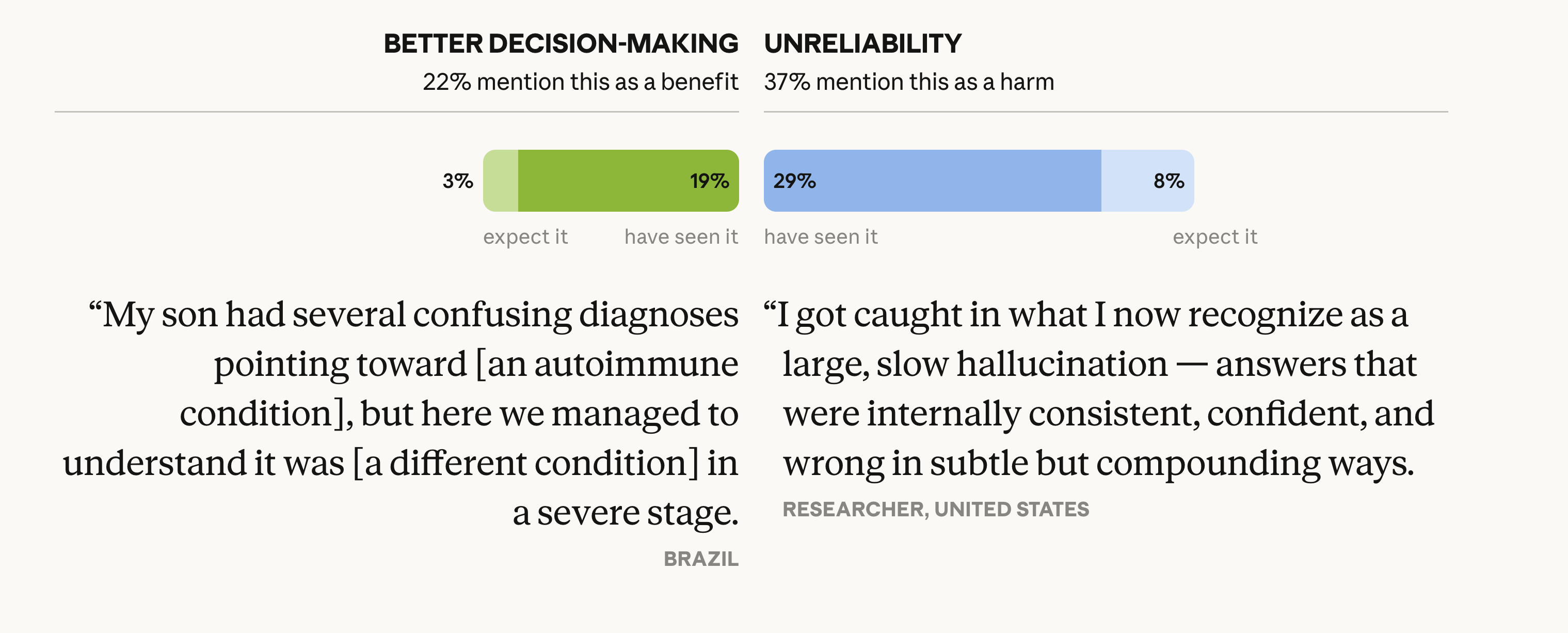

Better decisions vs. unreliable outputs.

Emotional support vs. dependency.

Time saved vs. illusory productivity.

Economic empowerment vs. displacement.

Hope and alarm share a room inside every person using AI right now. We are changing, in real time.

Trading problems for problems

Self-employed: 47% report economic benefits from AI

Employees: 14% report economic benefits from AI

3.3x the benefit. Not a rounding error. A chasm.

Why?

Some extrapolation on my part:

Self-employed people choose their own tools, workflows, and priorities.

Employees wait for IT approval, company-wide rollouts, and manager buy-in.

By the time a Fortune 500 company finishes its “AI transformation strategy” slide decks, a freelancer has already rebuilt their entire practice.

I could definitely do CRO (conversion rate optimization) myself. But I’d rather not. I’d rather focus on higher-leverage activities. It’s also terribly boring and energy-draining for me.

But optimizing our marketing funnel is critical.

Over time, could I even do it anymore?

Or would I just look toward an AI for it and become hyper-dependent?

Perhaps that is the same as putting down one survival task (wolf) to do other tasks (dog).

The #1 aspiration is “professional excellence,” not “replace my job.”

The largest share wanted to be better at work they already care about.

Not “do my job for me.”

Not “let me work less.”

They wanted to be better at the work they already cared about.

The second most common aspiration?

Personal transformation at 13.7%. Growth. Emotional wellbeing. Becoming a better version of themselves.

The single largest study on AI aspirations found that people want alignment.

They want to become more of who they already are, not outsource who they need to be.

I’ve been building Clarity around this thesis the entire time. 81,000 strangers just validated it without knowing my company exists.

Amazing.

So who wins in the AI economy? The wolves or the dogs?

Every tension the study surfaced comes down to the same question: Does this person know what they want AI to do, or are they letting AI decide for them?

Are they solving the puzzle, or turning around to look at the nearest AI model for the answer?

Learning vs. cognitive atrophy: If you know what you want to learn, AI accelerates you.

If you’re just asking it to “help,” you atrophy.

You literally become dumber. You lose your critical reasoning skills.

How can we maintain our agency?

What’s the difference in practice?

Managing Agents as a skill

I believe that the more you reinforce the habit of writing clear instructions for your AI, the more you retain your actual critical thinking.

Don’t just chuck half-assed asks at the AI, just as you wouldn’t (or shouldn’t) with an employee. You would give clear instructions and a target outcome.

Managing agents requires knowing what good output looks like.

Knowing what good output looks like requires knowing what you’re building and why.

Anyone can vibe code these days.

But creating something of quality still requires what it has always required:

Quality thinking and quality execution.

Which requires alignment.

The future of work isn’t human vs. AI.

It’s aligned humans with AI vs. everyone else.

We already see it. People are becoming dumber, losing their agency.

Dogs and wolves.

The question isn’t whether AI will change work. It already has.

The Domestication Nobody Agreed To

Think more deeply about the evolution of man’s best friend: dogs didn’t choose domestication.

No wolf woke up one morning and decided to trade independence for kibble.

It happened across generations.

Slowly.

Each generation a little more comfortable, a little less capable, a little more dependent.

67% of the 81,000 people Anthropic surveyed had net positive views of AI.

They love it. It saves them time. It makes them productive. It handles the hard parts.

Many also reported cognitive atrophy. Dependency. A creeping suspicion that they’re losing something they can’t name.

Both things are true.

Imagine…

You’re a developer who ships 10x faster but notices that debugging without AI now annoys you.

You’re a writer who produces more content and wonders whether any of it is truly yours.

We keep framing this as a policy question. Regulation. Retraining. UBI.

The works.

Those questions assume we’ll notice the transition and choose how to respond. The dogs didn’t notice.

The transition was too gradual, too comfortable, too rewarding at each step.

15,000 years of evolution.

We’re speedrunning it in… 15?

We are already seeing the changes. Just look around.

Look at the kids who grew up with TikTok. Examine the way they think and what they prioritize.

Then, tell me that we are not changing.

And here’s the interesting thing to think about…

The wolves in that Vienna lab solved the puzzle 80% of the time.

The dogs solved it 5% of the time.

But…

The dogs had warm beds, regular meals, and someone who loved them.

How many wolves get free meals and warm beds?

Zooming out, there are roughly 900 million dogs and 300,000 grey wolves in the world today.

By the metric of evolutionary fitness, the dogs “won.”

Far more of them exist today than their wolf counterparts.

They just can’t solve these particular problems anymore.

I look at my own hands. I ship faster than I ever have. I build things I couldn’t have imagined building alone.

I also haven’t written any custom code in months, and it looks like that will turn into years.

Perhaps one day I will not be able to solve those particular problems anymore.

And as I think these thoughts, I cannot help but wonder what will happen to my future kids.

Somewhere in the back of my mind, a question I can’t shake:

Am I the wolf who found a better tool?

Or the first generation of dog that still remembers being a wolf?

What are we becoming?

References

- [1] When dogs look back: inhibition of independent problem-solving behaviour in domestic dogs compared with wolves · Udell et al. · Biology Letters · 2015

- [2] How 81,000 People Really Feel About AI · Anthropic · anthropic.com · 2026